Upcoming Events

SF & Bay Area

Thu, Aug 22: 🧠 GenAI Collective 🧠 Marin AI Aug Co-Working

Thu, Sep 5: 🧠 GenAI Collective Marin 🧠 The Scaling Salon

🗓️ Hungry for even more AI events? Check out SF IRL, MLOps SF, or Cerebral Valley’s spreadsheet!

NYC

Tue, Sep 10th: 🧠 GenAI Collective NYC 🧠 Research Roundtable

Tue, Sep 17th: AI Founder Social - Lynx Collective x Gen AI Collective

Are you a researcher or founder pushing the boundaries of AI in NYC? We invite you to be part of our research roundtable! Share your groundbreaking work, engage in thought-provoking discussions, and connect with fellow innovators. Reach out to us at nyc@genaicollective.ai to learn more.

Miami

Thu, Aug 29th: 🧠 GenAI Collective 🧠 Miami Kickoff 🌴

This week, we are excited to share an insight piece from our very own Aqeel Ali, who leads our community-building efforts through internal code and no-code projects that streamline operations and boost community engagement. Aqeel dives into the escalating “LLM Wars” with the release of Llama 3.1, Mistral Large 2, and GPT-4o Mini.

AI Model Wars

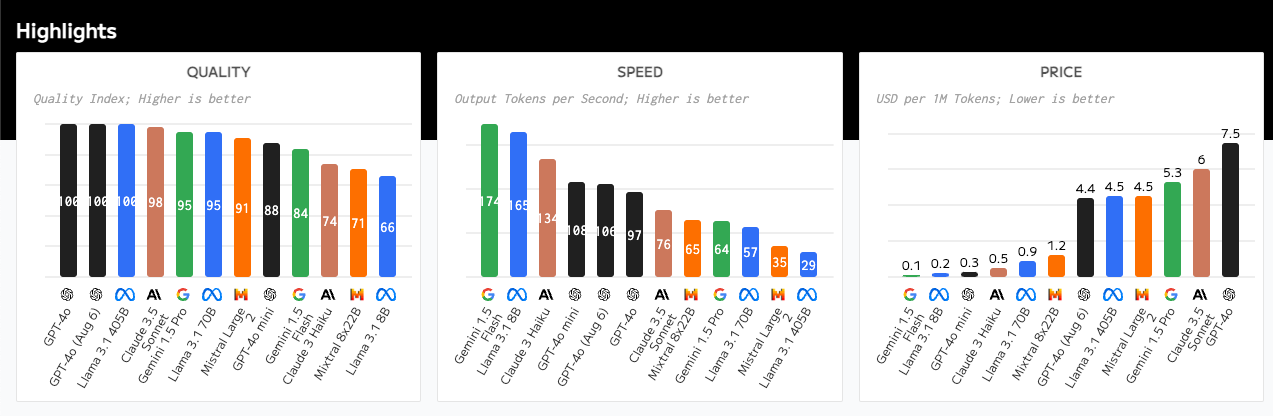

In this week's edition, we're excited to bring you a critical analysis of recent developments in the AI landscape, where competition among state-of-the-art models is intensifying. The release of Llama 3.1, Mistral Large 2, and GPT-4o Mini signals a new phase in what we can aptly call the "LLM Wars." These advancements are reshaping the AI ecosystem, with implications for cost efficiency, model performance, and the future of generative AI applications. We’ll also touch on the unexpected emergence of Flux 1, a formidable contender in the generative visual space, challenging the likes of Midjourney and DALL·E 3.

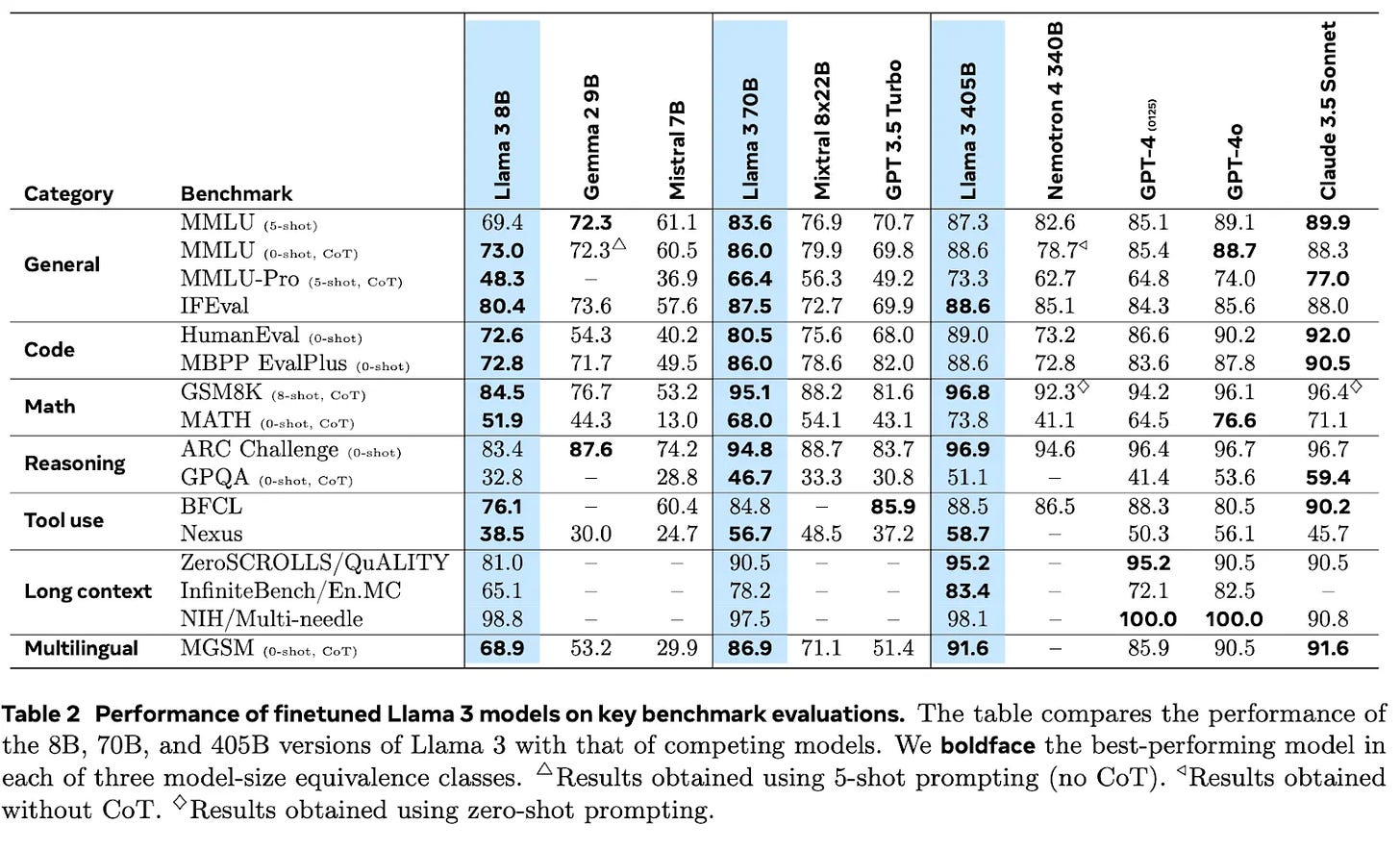

Llama 3.1: The Giant's Footsteps Send Earthquakes

Llama 3.1, Meta's latest release, has entered the AI arena, and the impact is undeniable. The release includes a range of model sizes (8B, 70B, and 405B parameters), making it possible to run models comparable to the giants of OpenAI and Anthropic on more accessible hardware. Llama 3.1 has posted benchmark results that rival state-of-the-art closed models across many categories, and it offers powerful multilingual capabilities, an extended context window, and, best of all, an open model license agreement. Perhaps most exciting: this model lowers the barrier to entry, enabling developers and businesses to build with less reliance on Anthropic or OpenAI’s platforms.

This unprecedented combination of accessibility and performance has resonated deeply with the community, winning the hearts of developers and founders across the ecosystem.

Mistral Large 2: The Silent Powerhouse

Mistral AI's release of Mistral Large 2 (ML2), a 123-billion-parameter model, delivers near top-tier performance with a leaner, more efficient architecture, making it ideal for commercial applications where deployment efficiency is critical. For example, think of applications that serve users on devices with limited processing power, like smartphones or IoT devices, where real-time responses are crucial (e.g., sales reps in the field or folks in healthcare). ML2’s efficiency means it can deliver powerful AI capabilities to those users without requiring the most expensive, high-end serving hardware.

Despite competing head-to-head with giants like GPT-4 and Llama 3.1, ML2’s more restrictive licensing and limited fanfare have raised questions about its broader impact. While ML2 excels in specific areas such as reasoning and accuracy, its potential to drive innovation might be constrained by its accessibility, particularly in a market where open-source models have traditionally thrived by encouraging experimentation and community-driven development. The model’s lean size and efficiency are significant strengths, but they may not be enough to overcome the challenges posed by its limited availability and the overshadowing presence of more widely adopted models.

GPT-4o Mini: Cost-Effective Performance

Around the same time as Llama 3.1’s announcement, OpenAI unexpectedly announced GPT-4o Mini, repositioning itself as the provider of the most cost-efficient alternative in the LLM space. While OpenAI has kept the exact size of this model under wraps, it's speculated to be in the same tier as Llama 3 8B. The model maintains respectable performance, particularly in coding and mathematical reasoning tasks, while offering significant cost reductions. Priced at just 15 cents per million input tokens and 60 cents per million output tokens, GPT-4o Mini is dramatically cheaper than GPT-4o, making it an attractive option for developers with budget constraints. The savings are substantial: GPT-4o Mini is 33 times cheaper for input tokens and 25 times cheaper for output tokens, which adds up quickly on longer projects. Moreover, feedback from builders suggests that GPT-4o Mini is significantly faster than open-source competitors thanks to OpenAI's optimization of its backend, making it much easier to work with than optimizing other models for your own infrastructure. This strategy has democratized access to advanced language models, making some of the most powerful AI technology available to broad audiences.
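To make the pricing gap concrete, here is a rough back-of-the-envelope sketch. The GPT-4o Mini prices are the ones quoted above; the GPT-4o prices are not stated in this piece and are simply back-derived from the 33x and 25x ratios, and the workload (50M input tokens, 10M output tokens) is a made-up example, so treat the numbers as illustrative only.

```python
# Prices in USD per one million tokens.
# GPT-4o Mini prices are quoted in the article.
MINI_INPUT_PRICE = 0.15
MINI_OUTPUT_PRICE = 0.60

# ASSUMPTION: GPT-4o prices are back-derived from the article's
# "33 times" and "25 times" ratios, not taken from a price sheet.
GPT4O_INPUT_PRICE = MINI_INPUT_PRICE * 33
GPT4O_OUTPUT_PRICE = MINI_OUTPUT_PRICE * 25


def cost_usd(input_tokens: int, output_tokens: int,
             input_price: float, output_price: float) -> float:
    """Total cost in USD for a workload, given per-million-token prices."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical workload, chosen only for illustration.
INPUT_TOKENS = 50_000_000
OUTPUT_TOKENS = 10_000_000

mini_cost = cost_usd(INPUT_TOKENS, OUTPUT_TOKENS, MINI_INPUT_PRICE, MINI_OUTPUT_PRICE)
full_cost = cost_usd(INPUT_TOKENS, OUTPUT_TOKENS, GPT4O_INPUT_PRICE, GPT4O_OUTPUT_PRICE)

print(f"GPT-4o Mini: ${mini_cost:,.2f}")
print(f"GPT-4o:      ${full_cost:,.2f}")
print(f"Blended savings factor: {full_cost / mini_cost:.1f}x")
```

Because real workloads mix input and output tokens, the blended savings factor lands somewhere between 25x and 33x, depending on how input-heavy the workload is.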

Flux 1 by Black Forest Labs: A New Contender Steps into the Ring

Bursting onto the scene out of stealth, Flux 1 has entered the generative AI arena as a fierce competitor, showing performance comparable to industry titans like Midjourney's new v6.1 and OpenAI's DALL·E 3. Developed by the experts behind Stability AI's open-source models and diffusion techniques, Flux 1 has garnered significant attention with its recent launch and $31 million funding announcement led by a16z. Flux 1 offers three distinct variants (FLUX.1 Pro, FLUX.1 Dev, and FLUX.1 Schnell), each tailored to different use cases. It delivers superior image quality with a focus on technical precision, making it ideal for applications requiring photorealism and detail. The open availability of the Dev and Schnell variants, combined with an accessible user interface, positions it as a formidable challenger in the creative AI space. As highlighted in TechCrunch's coverage, Black Forest Labs, the startup behind Flux 1, is also collaborating with xAI to power Elon Musk’s AI image generator, further showcasing the model’s potential to disrupt the industry by winning a key distribution channel. The emergence of Flux 1 not only highlights the potential for open-source innovation but also signals a shift in the balance of power within the generative AI ecosystem.

Meta’s Brilliant Strategic Play Behind Open Source AI

As the LLM Wars heat up, Mark Zuckerberg’s decision to open-source Llama 3.1 isn’t just about “fostering innovation in the community”; it’s a brilliant strategic move. Meta “isn’t in the business of computing” like their frenemies Google, Amazon, and NVIDIA. Instead, they’re focused on building an ecosystem where AI developers and businesses thrive, driving more engagement and revenue through their core platforms. By lowering the barriers to entry, Meta ensures that more developers use their APIs, more new businesses rely on their ad networks, and general consumer activity on their platforms increases, all while solidifying their dominance in the AI landscape. Millions of developers can now innovate much faster and pool their collective intelligence (e.g., optimizations, model variants, training techniques). For example, see this week’s “Hermes 3” releases by Nous Research, which have surpassed the base model on AGIEval.

Meta’s open-source strategy is far from a simple act of generosity; it’s a calculated effort to consolidate power and influence. And let’s be honest, offering groundbreaking tech for free does wonders for public favor and likeability—especially when your reputation could use a little polish.

Why should the ecosystem celebrate these developments?

Competition is great for the AI ecosystem, and the current phase of the LLM Wars promises continued foundational model innovation, less vendor lock-in, and, most importantly, lower prices. The days of larger and larger models may be coming to an end, with key players focusing on small language models (<20B parameters) that bring greater flexibility, ease of use, and cost efficiency to the application layer. You don’t need a sledgehammer to crack a nut, and the ecosystem has found that the same holds true when building for most AI use cases. Let’s hope this competitive trend continues because, if so, the widespread adoption of GenAI we've all been anticipating could soon become a reality.

Events Spotlight

GenAI Collective x Æthos 💼 Future of Life & Work in the age of AI

The GenAI Collective is officially LIVE in Boston! Hosted jointly with Æthos and NextStep Health, we had an enlightening evening discussing a variety of topics related to the future of life and work, including everything from how AI is changing the nature of education to how it's being used to fight polarization and strengthen democracy!

The GenAI Collective x Headline 💸 How to Pitch Your AI Startup to Investors Panel

We had an amazing panel that demystified the process of fundraising and provided valuable insights to the entrepreneurs in our community looking to fundraise in this hypercompetitive market. We appreciate everyone who joined us for their active participation and engaging discussion. Huge thank you to our VC panelists, Sasha Krecinic (Headline VC), Amber Illig (The Council), and Danielle Jing (Pear VC), for joining the panel, and special thanks to our co-hosts, Headline, for sharing their beautiful office space with us and making this event possible.

Gen AI Collective NYC Executive Dinner with Pitango VC and Comet ML

Huge thank you to all the attendees and organizers of the Gen AI & Enterprise Innovation executive dinner. The dinner featured in-depth discussions and passionate debates about the future of industry with current AI founders and leaders. The energy in the room was contagious, with everyone chiming in on how companies are using GenAI to solve domain-specific problems. Shoutout to our NYC Steerco team (Samantha and Randy) for helping make this happen, along with Roy Nissim and Aviv Barzilay for inviting us along as co-hosts.

Join the GenAI Collective team! 👷

This is a true labor of love and we are always looking to involve new leaders who share our passion for community building!

Does this sound like you? Learn more and reach out to us here!

About Aqeel Ali

Aqeel is currently Chief Operations Officer at DeepAI. At The Gen AI Collective, he draws on his generalist experience to build systems that increase the value the community creates and amplify organizers' efforts. When not immersed in the world of AI, startup operations, or crafting satirical jokes, Aqeel delves into psychology and human creativity. 🧠